Not Sure Which Languages to Choose?

The most expensive mistake in YouTube localization can be translating the wrong video into the wrong language, in the wrong order, and then assuming the market is not there when the views do not come.

We have seen this pattern too many times. A creator picks a random video, adds a translated track, and hopes global growth will just happen. But one translated file does not build momentum. One translated file does not create bingeing. One translated file does not tell YouTube how to place your content in a new market.

That is why some translated videos crawl to 5,000 views while others break 100,000 and open the door to much bigger things.

This article is about that difference: translation as a growth system that starts with metadata, moves through subtitles and dubbing, and ends with the structure that helps a translated video actually travel. Let’s start.

Step 1: Translate the Metadata Before You Touch the Audio

Before a translated video can get 100,000 views, YouTube first has to classify it correctly in a new language environment. The platform’s own statement is clear: translated titles and descriptions help viewers discover videos in their own language, and translated YouTube metadata can show up in search for those viewers.

That is why we treat AI metadata translation as the first and main step.

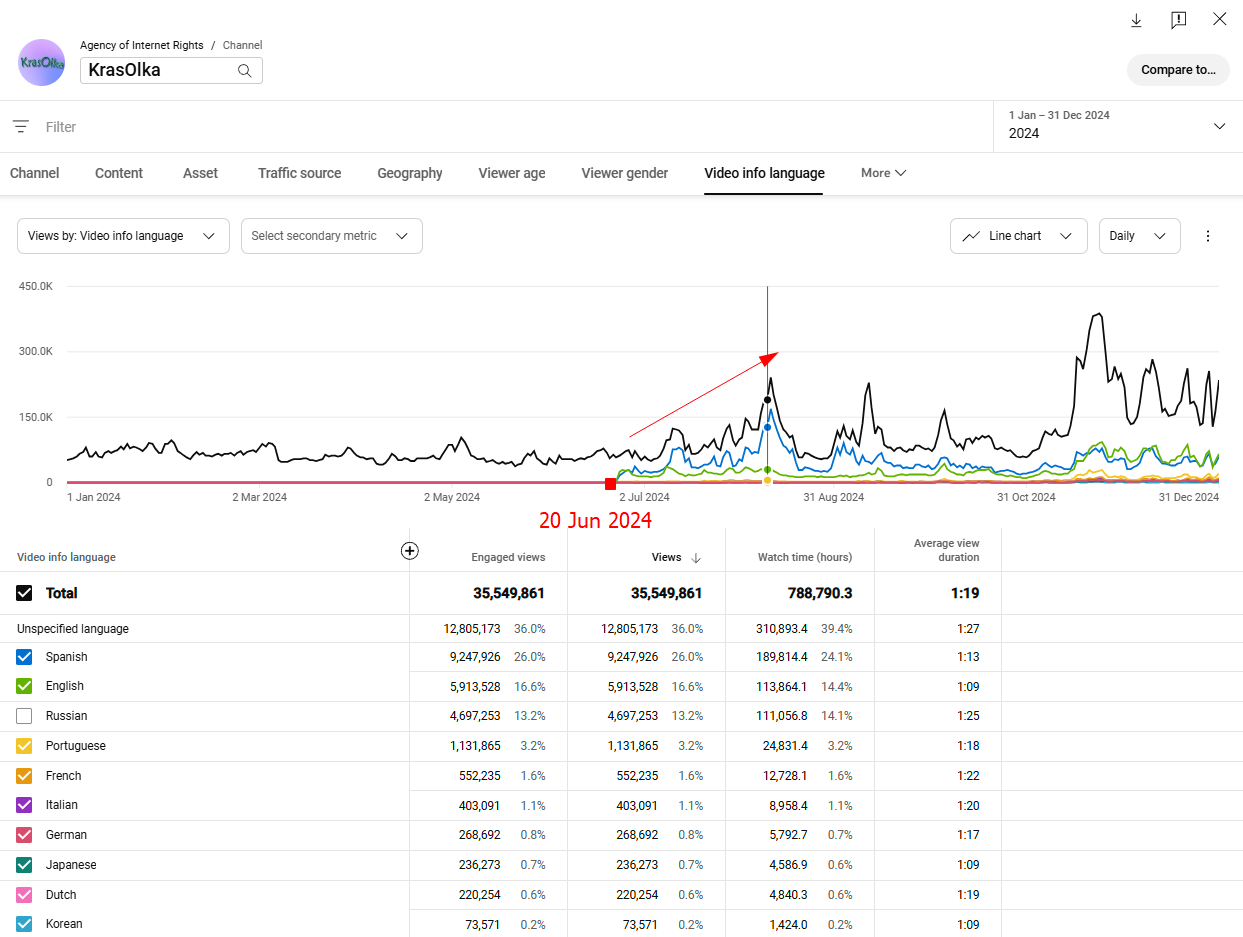

We have seen this repeatedly in AIR cases. Our partner KrasOlka did not start with voice dubbing. We translated their metadata for every video into 9 languages, and over the following six months, the channel grew 148% in views, 97% in subscribers, and 46% in ad revenue.

In this case, metadata translation raised views from 9.15M to 22.7M and reshaped the audience geography.

The USA moved into the #1 spot, Mexico stayed strong, and Argentina, Brazil, Spain, and Colombia all grew, while Russia fell from #1 to #6. That is a strong sign that translated metadata helped YouTube reclassify the content and surface it to new markets.

That geography shift matters because, since March 2022, views from Russia have not been monetized due to sanctions and the suspension of advertising services in the country. As a result, creators do not earn revenue from those views.

So, this was not just a redistribution of views. With the USA at $14.67 CPM, its move into first place turned the growth into a much stronger monetization opportunity. Overall, the channel’s monetization profile improved by about 20.5%, which makes the geography shift almost as important as the traffic growth itself.

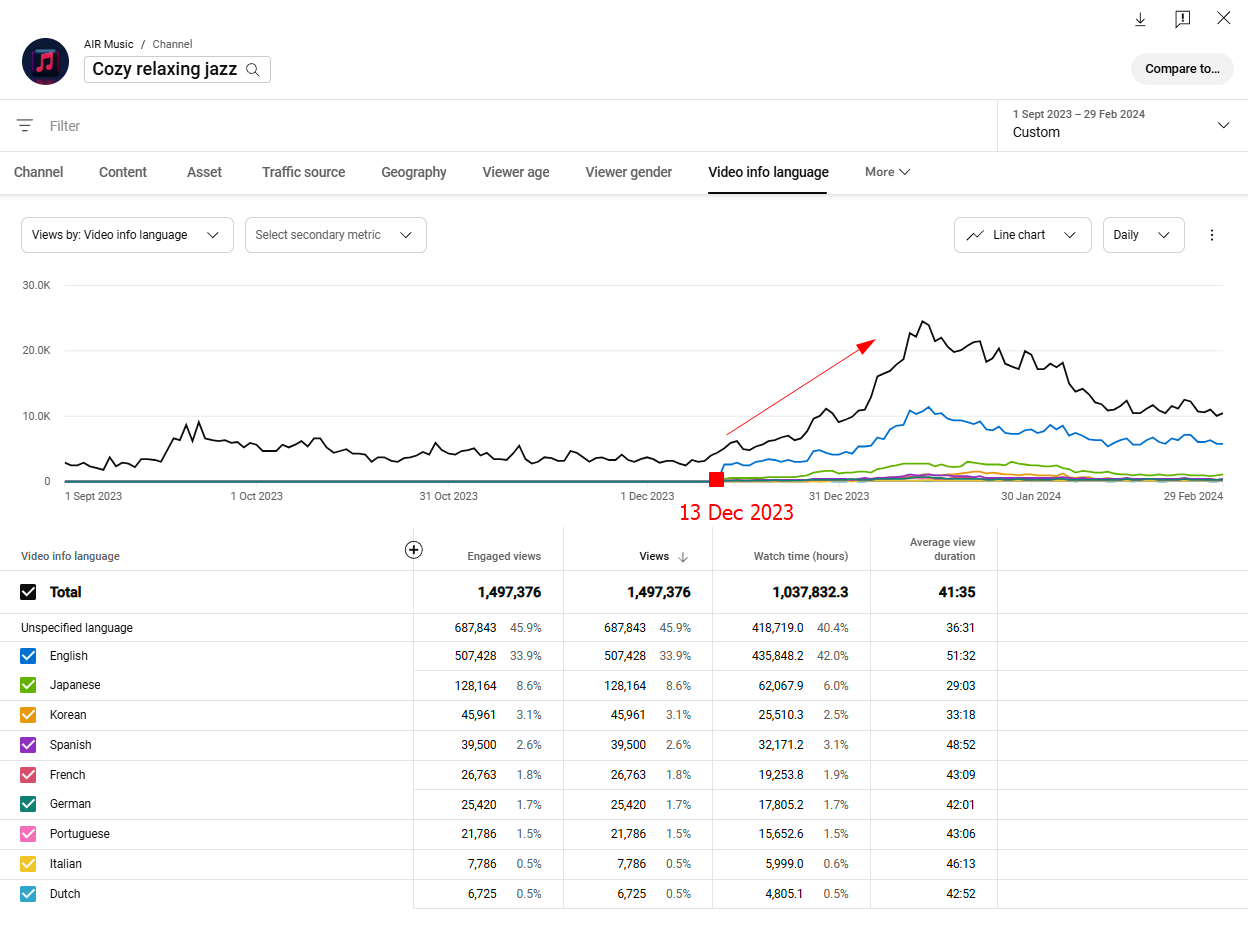

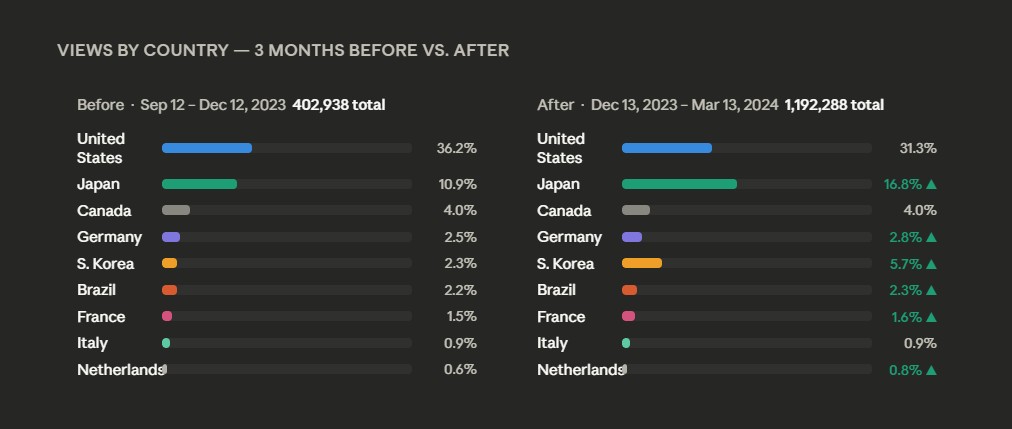

Another partner, Cozy Relaxing Jazz, grew 195% in views, 169% in ad revenue, and 126% in subscribers after metadata was localized into 8 languages; 25% of the channel’s total traffic now comes directly from translated YouTube metadata.

From a monetization perspective, that makes the shift even more meaningful. The market mix stays very healthy: the U.S. at $14.67 CPM, Canada at $9.93 CPM, Germany at $9.79 CPM, France at $6.76 CPM, Japan at $5.68 CPM, and South Korea at $5.73 CPM.

The weighted CPM across the visible countries changes only slightly, from about $6.94 to $6.81, but because total views nearly tripled, the rough revenue potential from those visible markets rises over 3.1x.
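The arithmetic behind a view-weighted CPM and a rough revenue multiple can be sketched in a few lines. This is a minimal illustration, not AIR's reporting method: the CPM values are the ones quoted above, while the `before` and `after` view counts and the function names are hypothetical.

```python
# CPM figures are the ones quoted in the article; all view counts are hypothetical.
CPM_USD = {"US": 14.67, "CA": 9.93, "DE": 9.79, "FR": 6.76, "JP": 5.68, "KR": 5.73}

def weighted_cpm(views_by_country):
    """View-weighted average CPM across the listed countries."""
    total_views = sum(views_by_country.values())
    if total_views == 0:
        return 0.0
    weighted_sum = sum(CPM_USD[c] * v for c, v in views_by_country.items())
    return weighted_sum / total_views

def revenue_potential(views_by_country):
    """Rough revenue potential; CPM is priced per 1,000 views."""
    return sum(CPM_USD[c] * v / 1000 for c, v in views_by_country.items())

# Hypothetical before/after view counts, for illustration only.
before = {"US": 100_000, "JP": 300_000, "KR": 200_000}
after = {"US": 300_000, "JP": 900_000, "KR": 700_000, "DE": 100_000}

print(f"weighted CPM before: ${weighted_cpm(before):.2f}")
print(f"weighted CPM after:  ${weighted_cpm(after):.2f}")
print(f"revenue multiple:    {revenue_potential(after) / revenue_potential(before):.2f}x")
```

The point of the calculation is that the revenue multiple depends on both view growth and the country mix, so a flat weighted CPM can still come with a large revenue increase.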

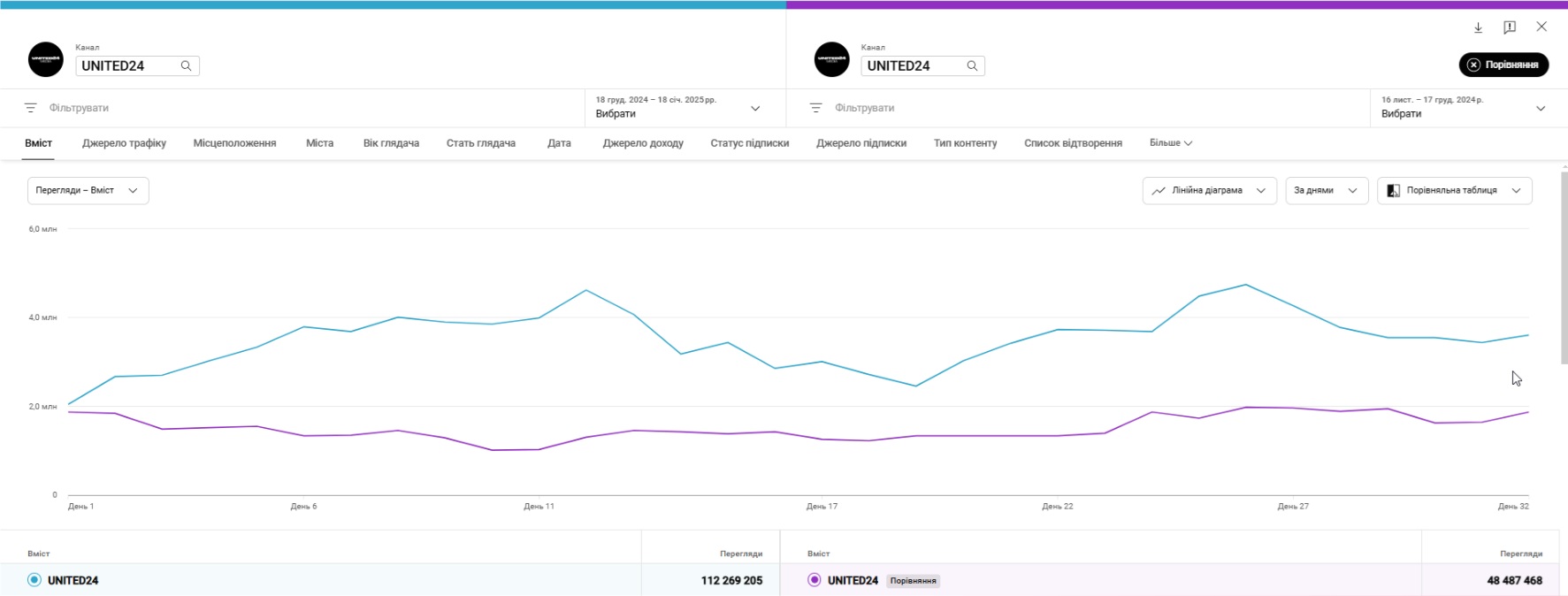

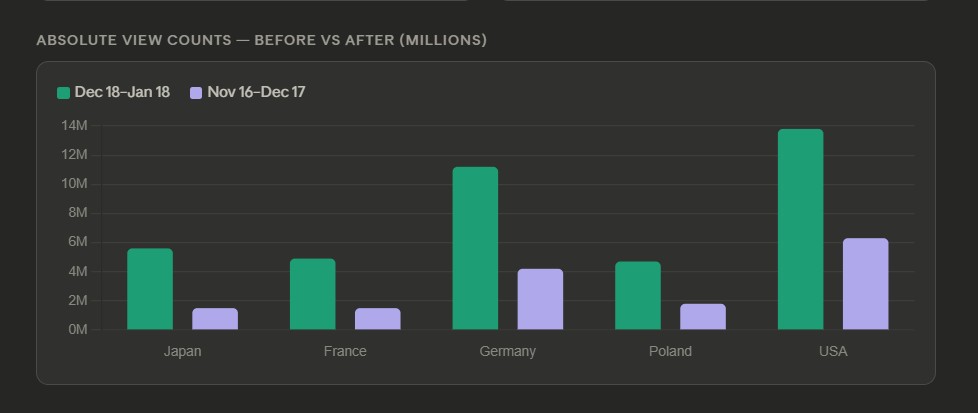

UNITED24 saw an even sharper redistribution: after metadata localization into 10 languages, views jumped from 48 million to 112 million in 30 days, a 131% increase, while revenue rose 52%.

We can see a sharp rise in absolute views across premium markets after metadata translation. The U.S. climbed to nearly 14M views, Germany to 11M+, Japan to 5.6M, France to 4.9M, and Poland to roughly 4.7M. Since these countries also carry relatively strong CPMs — especially the U.S. at $14.67 and Germany at $9.79 — the result was not just more traffic, but a much stronger monetization mix too.

That is exactly what strong metadata translation should do: not just increase reach, but redirect the channel toward markets that are both larger and more commercially attractive.

And that is why we would not start by dubbing 50 random videos.

We would start by translating the metadata on the videos that already have proof of life.

A good first pass is simple: pick the top 20% of your catalog by views, retention, and evergreen value. Translate titles and descriptions into the markets you want to test. Then watch whether impressions, clicks, and watch time start moving by country. The point is to create the first usable signal.
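One way to turn "top 20% by views, retention, and evergreen value" into a repeatable shortlist is a simple composite score. The sketch below is an assumption-heavy illustration: the `Video` fields, the equal weighting, and the definition of `evergreen_share` are all hypothetical and should be swapped for whatever your analytics export actually provides.

```python
import math
from dataclasses import dataclass

@dataclass
class Video:
    title: str
    views: int
    avg_view_pct: float     # average percentage viewed, 0-100
    evergreen_share: float  # hypothetical: share of views earned after the first 90 days, 0-1

def shortlist(videos, fraction=0.2):
    """Return the top `fraction` of videos by an equal-weight composite score."""
    if not videos:
        return []
    max_views = max(v.views for v in videos) or 1

    def score(v):
        # Each component is scaled to roughly 0-1 before averaging.
        return (v.views / max_views + v.avg_view_pct / 100 + v.evergreen_share) / 3

    keep = max(1, math.ceil(len(videos) * fraction))
    return sorted(videos, key=score, reverse=True)[:keep]

# Hypothetical catalog, for illustration only.
catalog = [
    Video("How to fold a paper crane", 1_200_000, 48.0, 0.70),
    Video("Channel trailer", 90_000, 35.0, 0.20),
    Video("Weekly news recap", 600_000, 55.0, 0.05),
    Video("Knife sharpening basics", 800_000, 61.0, 0.85),
    Video("Studio tour", 150_000, 40.0, 0.30),
]
for video in shortlist(catalog):
    print(video.title)
```

Equal weights are only a starting point; a channel that depends on search traffic might weight the evergreen component more heavily.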

This is exactly where AI Metadata Translation makes the most sense. It’s a testing layer before dubbing, and the product page says it can translate YouTube titles and descriptions into 200+ languages. AIR also offers the first 100 metadata translations for free, making it a low-cost smoke test before you commit to voice work.

What to do at this step: localize metadata first, measure demand second, and only then decide whether the video deserves subtitles, audio, or a separate channel strategy.

Not sure which language to try first?

We’ll look at your analytics, content format, and audience signals to find the markets with the best growth potential. Reach out to us, and we’ll map out the smartest first move.

Step 2: Use Subtitles to Widen Reach, Then Use Audio to Deepen Retention

Creators often frame subtitles and dubbing as if one has to replace the other. But that is the wrong way to think about it.

Subtitles are often the cheaper reach layer. Dubbing is the retention layer.

YouTube’s help center explicitly recommends translated metadata for discoverability and subtitles for broader accessibility. But in 2026, subtitles do more than help viewers follow along. They also act like a text map that helps YouTube understand what your video is about and where it belongs inside its recommendation system.

One AIR case shows this especially clearly. Instead of translating everything, our team focused on selected strong videos, adding AI-edited subtitles in 20+ languages and translating metadata into key target languages like Spanish, Hindi, Portuguese, Turkish, and Vietnamese.

Comparing two six-month periods, total views rose from 47.7 million to 52.8 million.

About 21.7% of all views came from videos with subtitles, which meant 11 million+ views were directly influenced by subtitled versions. Another 8% of views were tied to AI-translated metadata, which means roughly 4.2 million views came because users found the videos through translated titles, descriptions, or tags.

We saw a similar logic in the AIR kids multi-language audio (MLA) case. After the first language test, subtitles kept rolling out across 11 languages, while dubbing was limited to the 6 markets that showed the strongest demand. That let the team widen reach first, while keeping dubbing investment focused where retention potential was already visible.

Many channels can get surprisingly far with metadata plus subtitles before audio becomes essential. That is especially true for DIY, visual tutorials, fitness, craft, music, and process-driven formats.

But subtitles only work if they are done properly.

Translation changes text length. English to Spanish often becomes 20–30% longer, while English to Arabic can become much shorter. That affects timing, line breaks, and readability. If you use raw auto-translate output as the final version, the subtitles often drift out of sync with the actual speech. So the better approach is to work with SRT files that are manually corrected or at least AI-corrected and then checked against timing.

That timing matters more than most creators think. The subtitle should appear exactly when the speaker starts and disappear when the line ends. If it lags behind or jumps ahead, viewers feel friction immediately. You can use tools like Amara, Rev, or Descript to tighten start and end times.
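A quick automated pass can catch cues that became too dense after translation before anyone reviews them by hand. The sketch below parses standard SRT timing lines and flags cues that exceed a reading-speed limit. The 17 characters-per-second default is an assumed threshold for illustration, not a YouTube rule, and the function names are hypothetical.

```python
import re

TIMING = re.compile(
    r"(\d{2}):(\d{2}):(\d{2}),(\d{3}) --> (\d{2}):(\d{2}):(\d{2}),(\d{3})"
)

def to_seconds(h, m, s, ms):
    return int(h) * 3600 + int(m) * 60 + int(s) + int(ms) / 1000

def parse_srt(text):
    """Yield (start, end, text) for each cue in an SRT document."""
    for block in re.split(r"\n\s*\n", text.strip()):
        lines = block.splitlines()
        for i, line in enumerate(lines):
            match = TIMING.search(line)
            if match:
                g = match.groups()
                yield to_seconds(*g[:4]), to_seconds(*g[4:]), " ".join(lines[i + 1:])
                break

def too_fast(text, max_cps=17.0):
    """Return cues that read faster than max_cps or have a non-positive duration."""
    flagged = []
    for start, end, cue in parse_srt(text):
        duration = end - start
        if duration <= 0 or len(cue) / duration > max_cps:
            flagged.append((start, cue))
    return flagged

sample = """1
00:00:01,000 --> 00:00:03,000
Hola a todos

2
00:00:03,000 --> 00:00:04,000
Hoy vamos a preparar una receta muy sencilla
"""
for start, cue in too_fast(sample):
    print(f"{start:.1f}s: {cue}")
```

A check like this only measures density. Whether a cue actually lines up with the speech still has to be verified against the audio.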

Also, test subtitles on mobile, not just desktop. 69% of YouTube traffic comes from phones, so subtitles that look fine on a laptop can feel cramped, tiny, or too fast on a smaller screen.

And if you are using MLA, the subtitle track has to match the dubbed audio track. If the dub says one thing and the subtitle says another, viewers notice. That mismatch breaks trust fast and can hurt retention.

One more important detail: whenever possible, use proper closed captions or subtitle tracks, not burned-in text. Searchable subtitle files help YouTube understand the content better. Burned-in subtitles may help a viewer read, but they are far less useful as a discoverability signal.

So the right sequence is usually:

- metadata first

- subtitles second

- dubbing third

That sequence lowers risk and tells you whether the content is being rejected because viewers cannot find it, cannot understand it, or do not enjoy it enough to keep watching.

What to do at this step: subtitle widely, dub selectively, and let retention decide when it is time to invest more.

Step 3: Run a Small Smoke Test to Pick Languages

The fastest way to waste money in localization is to assume every video deserves every language. That is not how our best cases grow.

The better approach is what we often call a smoke test: a small group of strong videos translated into a broader set of possible markets, just long enough to see where real demand appears.

YouTube’s own Jamie Oliver case followed this logic. The team did not pick languages randomly. They analyzed where viewers were already watching from and what those markets wanted, then began uploading multi-language audio for Spanish- and Portuguese-speaking audiences.

The AIR kids MLA case followed the same principle, but in a more aggressive way.

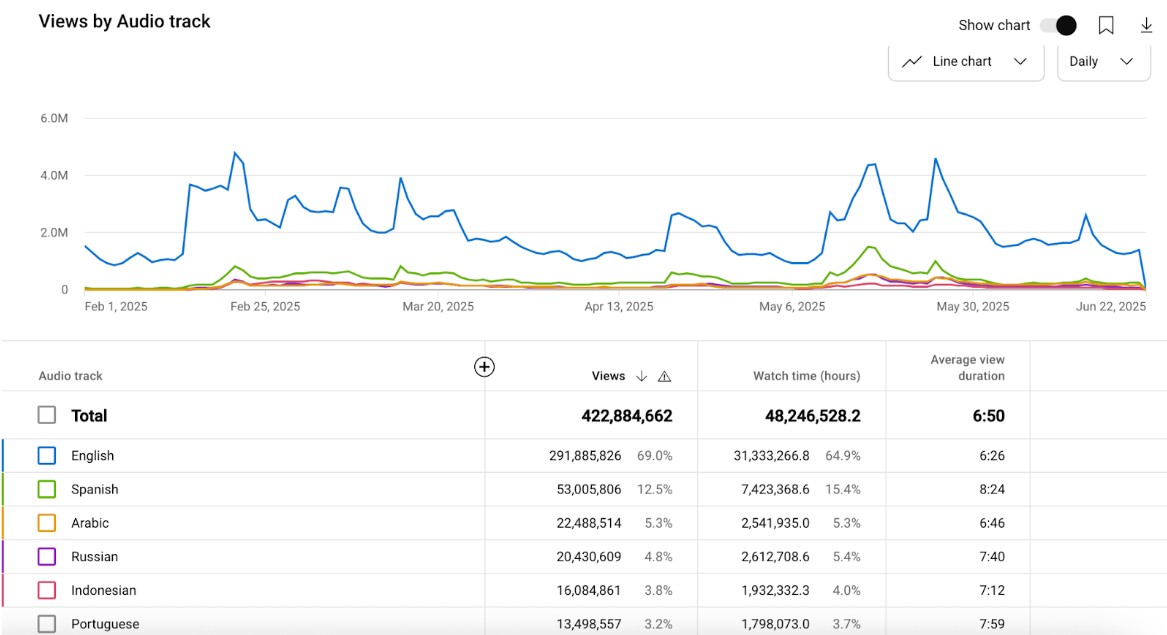

We started with 5 videos and 11 languages: Arabic, Chinese, Spanish, Portuguese, Turkish, Filipino, Japanese, Korean, Vietnamese, Hindi, and Indonesian. Then we watched which ones actually pulled ahead using the ratio of “Views by Country” to “Audio Track Enabled.” Spanish, Arabic, Indonesian, and Portuguese became early winners, with Japanese and Korean strong enough to stay in the core set. Only after that did we scale.
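To make that comparison concrete, here is a minimal sketch of one possible reading of the ratio: dubbed-track views divided by views from the countries where that language is spoken. This interpretation, the field names, and every number in the example are assumptions for illustration, not AIR's actual formula or data.

```python
def rank_languages(stats):
    """Rank languages by dubbed-track views per view from matching countries."""
    ranked = []
    for lang, s in stats.items():
        if s["country_views"] == 0:
            continue  # no baseline traffic to compare against
        ranked.append((lang, s["audio_track_views"] / s["country_views"]))
    return sorted(ranked, key=lambda item: item[1], reverse=True)

# Hypothetical numbers, for illustration only.
stats = {
    "es": {"country_views": 200_000, "audio_track_views": 150_000},
    "tr": {"country_views": 80_000, "audio_track_views": 12_000},
    "id": {"country_views": 120_000, "audio_track_views": 78_000},
}
for lang, ratio in rank_languages(stats):
    print(f"{lang}: {ratio:.2f}")
```

Ranking by a ratio rather than raw views keeps a large but indifferent market from crowding out a smaller one where viewers actually switch to the dub.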

As a result, the dubbed versions added 125.5 million views.

This is the first thing creators need to adopt: do not translate everything because a language looks attractive on paper. Translate enough to get a signal.

If your content is visual, evergreen, or process-led, the smoke test can start with metadata alone. If it is voice-led, emotional, or story-heavy, you will usually need subtitles sooner and dubbing earlier.

But the underlying logic stays the same: test a small batch before you industrialize anything.

Don’t jump straight to “best CPM countries” or “largest languages.” That is only part of the story. The more useful question is: where is your content already showing the first signs of traction?

We would look at four things first: geography inside YouTube Analytics, comment language, retention by market, and whether the content format feels naturally exportable. Then we would split markets into two buckets: high-volume reach markets and high-value monetization markets.
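The two-bucket split can be sketched as a simple threshold check. The thresholds, the function name, and the view counts below are hypothetical; only the US and German CPMs come from the figures quoted earlier in the article.

```python
def bucket_markets(markets, min_views=100_000, min_cpm=6.0):
    """Sort countries into reach and monetization buckets (a country can be in both)."""
    buckets = {"reach": [], "monetization": []}
    for country, m in markets.items():
        if m["views"] >= min_views:
            buckets["reach"].append(country)
        if m["cpm"] >= min_cpm:
            buckets["monetization"].append(country)
    return buckets

# View counts are hypothetical throughout.
markets = {
    "US": {"views": 250_000, "cpm": 14.67},  # CPM quoted in the article
    "DE": {"views": 40_000, "cpm": 9.79},    # CPM quoted in the article
    "IN": {"views": 900_000, "cpm": 1.00},   # hypothetical CPM
    "PL": {"views": 20_000, "cpm": 3.00},    # hypothetical CPM
}
buckets = bucket_markets(markets)
print(buckets)
```

Markets that land in neither bucket are not necessarily dead. They simply have not yet shown a reason to be tested first.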

What to do at this step: take 3–5 proven videos, test 5–7 plausible languages, and do not guess winners before the analytics do.

Step 4: Publish MLA Like a System

This is where many translated videos stall.

A creator uploads one dubbed track, sees a small bump, and assumes the job is done. But the upload itself is the easiest part. One translated video is not a system. And if there is no localized path after that first click, growth usually stops there.

Yes, the technical setup is simple. To add multi-language features to your videos, you just need to:

- Open YouTube Studio on desktop and find the video you want to localize.

- Go to Languages (it can also be labeled "Subtitles").

- Click Add language and choose the target language.

- Click Add next to Dub (or "Audio").

But the real performance difference comes from what surrounds that upload.

The biggest lesson from AIR’s 125M-view kids case was this: do not localize one video — localize the viewing path. Every new premiere launched with dubbed tracks already in place, and before the next major upload, roughly 70% of the back catalog had already been localized in the winning languages.

That is what created bingeing. That is what stopped viewers from landing on one dubbed video and then running into an English-only wall. And that is what turned one experiment into 125.5 million additional views in 5 months, with 30%+ of total views coming from dubbed tracks.

Here’s what MrBeast has to say about his MLA strategy:

“I used to only do dubs on my new videos, but the problem was we would hypothetically put the Japanese dubs on all our new videos going up, but we never back-dubbed our catalog on my main English channel, and so the people that spoke Japanese would watch the newer videos, but they’d watch the old ones with no dubs, they’d get confused, it hurt session time, and we’d stop being recommended. So, you need to do your whole catalog, not just your recent videos. That way, any video people click on plays in that language, and so there’s no confusion. It gives that virtuous cycle with the algorithm you want on a video.” Source: Creator Insider.

What to do at this step: launch the translated video, but also localize the next clickable path around it: title, description, subtitle track, thumbnail, and enough back catalog to support bingeing.

Step 5: Multi-language Audio vs Separate Channels: What to Choose?

Once a language starts proving itself, you need to decide how to scale it: keep everything under one channel with multi-language audio, launch a separate localized channel, or combine both.

Multi-language video on YouTube is great when you want everything under one URL, one video object, one main channel, and a lower operational load. It is efficient. It is cleaner. It can compound faster when the content is already performing.

But it also has limits.

If you need native regional SEO, a local-language homepage, local-language community building, territory-specific sponsorship logic, and a stronger standalone brand in-market, separate channels still matter.

That is exactly why AIR’s real-world answer has become: both.

On the one hand, multi-language audio tracks helped the kids channel mentioned earlier add 125.5 million views in 5 months and push dubbed tracks to 30%+ of total views.

On the other hand, AIR helped build Lady Diana’s network of 52 localized channels, which together generated 7.6 billion views across multiple regions. Those channels were not just copies with translated voice-overs. Each one was built as a standalone growth machine, with its own branding, metadata, visuals, and regional strategy.

So, yes, at this step you can choose between multi-language audio and separate channels, but our team no longer treats this as a binary choice.

Ready to stop guessing and start testing?

The fastest way to global growth is to validate what works before you overspend on the wrong market. Contact us, and we’ll help you choose the right videos, languages, and next steps.

The Hybrid Model: When Both Work Better Together

The most interesting results often appear when those two systems start feeding each other.

One of the smartest AIR insights from recent channel work is something most creators still do not think about enough: dubbed audio does not have to stay locked to one channel.

If you have already produced a strong Spanish dub for your Spanish channel, that same audio asset can sometimes be added as a multi-language track on your German, Polish, or main channel too.

That matters because Spanish-speaking viewers do not only exist in “Spanish markets.” They exist across your whole ecosystem.

AIR calls this cross-seeding.

Instead of growing every localized channel in total isolation, you let successful language assets travel across the rest of the system.

The result has been powerful: channels that cross-seed dubbed tracks across their ecosystem see an average 45% increase in views compared to channels run independently without shared tracks.

The clearest example is Vania Mania Kids.

AIR helped build dedicated Spanish, German, and Polish channels that grew strongly. Then the team tested a separate Portuguese channel, but it did not take off.

So instead of forcing it, AIR added Portuguese as an MLA track to the already successful channels.

Result: 5 million additional views in six months from a Portuguese-speaking audience that had previously been missed.

Step 6: Read the Right Metrics Until the Translated Video Crosses 100K, Then Scale the Winner

A translated video does not tell you much on day one.

On YouTube, translated assets often need a few weeks for the recommendation system to find the right viewers. AIR’s average view duration (AVD) analysis shows that new dubbed tracks are often served broadly at first, and only later become more precise as the algorithm learns who responds. That is why a weak-looking first week does not always mean the translation failed.

So what should you watch?

The first metric is whether traffic starts shifting toward Suggested Videos and Browse Features. In the 125M-view kids case, that shift was a major turning point: after the premiere plus back-catalog localization, traffic rolled in from Suggested and then Browse. That is what made the curve compound.

The second metric is retention in context. Our AVD breakdown shows that device mix can dramatically change what “good” looks like. A Spanish track at 8:52 and a Hindi track at 6:18 might look like a dubbing problem until you realize Spanish traffic is TV-heavy while Hindi traffic is overwhelmingly mobile-heavy. So, compare AVD by device and by market, not just as one headline number.
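A small sketch shows how a headline AVD per track can hide the device mix behind it. The rows, field names, and numbers below are hypothetical and only illustrate the grouping; they are not taken from AIR's breakdown.

```python
from collections import defaultdict

def weighted_avd(rows, key):
    """View-weighted average view duration in seconds, grouped by key(row)."""
    watch_seconds = defaultdict(float)
    views = defaultdict(int)
    for row in rows:
        k = key(row)
        watch_seconds[k] += row["views"] * row["avd_s"]
        views[k] += row["views"]
    return {k: watch_seconds[k] / views[k] for k in views if views[k]}

# Hypothetical rows, for illustration only.
rows = [
    {"track": "es", "device": "tv", "views": 7_000, "avd_s": 600},
    {"track": "es", "device": "mobile", "views": 3_000, "avd_s": 380},
    {"track": "hi", "device": "tv", "views": 1_000, "avd_s": 590},
    {"track": "hi", "device": "mobile", "views": 9_000, "avd_s": 370},
]
by_track = weighted_avd(rows, key=lambda r: r["track"])
by_track_device = weighted_avd(rows, key=lambda r: (r["track"], r["device"]))

for k, seconds in by_track.items():
    print(k, round(seconds))
for k, seconds in by_track_device.items():
    print(k, round(seconds))
```

In this made-up data the two tracks perform almost identically on each device, yet the per-track averages differ sharply because one audience watches mostly on TV and the other mostly on phones.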

The third metric is monetization. We recommend calculating MLA revenue manually by matching geography-level CPM with view counts by audio track, because YouTube Studio still does not provide a one-click MLA revenue total.
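The manual matching can be sketched as a lookup and a sum. This is a rough estimate under stated assumptions: the function name and view counts are hypothetical, the Mexico CPM is invented for illustration, and countries without a known CPM are simply skipped.

```python
def mla_revenue_estimate(track_views, cpm_by_country):
    """Estimate revenue per audio track as sum(views / 1000 * CPM) over countries."""
    estimate = {}
    for track, by_country in track_views.items():
        estimate[track] = sum(
            views / 1000 * cpm_by_country[country]
            for country, views in by_country.items()
            if country in cpm_by_country  # skip countries with no known CPM
        )
    return estimate

cpm_by_country = {
    "US": 14.67,  # quoted in the article
    "DE": 9.79,   # quoted in the article
    "MX": 2.50,   # hypothetical
}
# Hypothetical views by audio track and country.
track_views = {
    "es": {"US": 40_000, "MX": 100_000},
    "de": {"DE": 50_000},
}
for track, usd in mla_revenue_estimate(track_views, cpm_by_country).items():
    print(f"{track}: ${usd:,.2f}")
```

Because CPM reflects advertiser-side pricing, treat the output as a way to compare tracks against each other rather than as a payout forecast.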

What to do at this step: track Suggested/Browse growth, AVD in context, and country-level monetization. Then scale the first market that proves it can hold attention, not just attract clicks.

Do Not Guess Your Way Into Global Growth

The smartest way to get 100,000 views on a translated video is to test what already has potential, see where the first signal appears, and scale only what proves itself.

That is exactly how AIR approaches channel localization.

We start with the videos that already have proof of life. We use metadata and subtitles to uncover demand. We track where views, retention, and monetization begin to move. Then we help creators decide what deserves dubbing, MLA, separate channels, or a hybrid structure.

That is how one channel can get a new market without wasting budget on ten dead ones.

For a practical first move, our AI Metadata Translation tool is the cleanest entry point: it translates YouTube titles and descriptions into 200+ languages, and AIR says the first 100 metadata translations are free.

Or just contact us, and we will review your channel, spot the strongest videos and markets to test first, and build the right translation path around them. That way, you can move toward global growth with real signals instead of guesses.